Stanford’s linked data production project focuses on technical services workflows. For each of four key production pathways, we will examine every step in the workflow, from acquisition to discovery, to determine how best to transition to a linked data production environment. We follow each workflow from start to finish in order to demonstrate an end-to-end linked data production process and to highlight areas for future work. The four pathways are: copy cataloging through the Acquisitions Department, original cataloging, deposit of a single item into the Stanford Digital Repository, and deposit of a collection of resources into the Stanford Digital Repository.

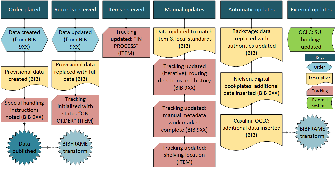

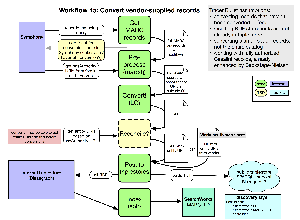

- Technical Services Workflows: Overview of Tracer Bullet project, with details on Tracer Bullet 1: Conversion of vendor-supplied MARC records (PowerPoint)

- Screenshots of tools and data used in Tracer Bullets 2-4: local instance of Library of Congress BIBFRAME Editor, Blazegraph triple store (PowerPoint)

Existing MARC workflows

Overview of existing MARC workflows

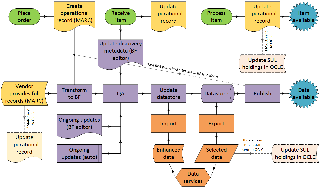

Firm orders

Approval orders

Receiving

Automatic record enhancements

MARC-to-BIBFRAME conversion workflow implemented by LD4P

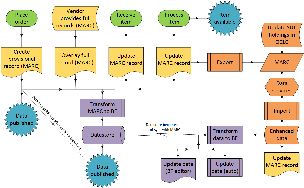

Conversion workflow overview

Described in Technical Services Workflows (PowerPoint)

Dataflow for conversion workflow

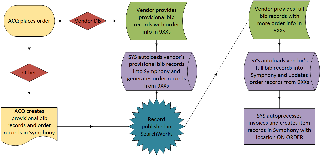

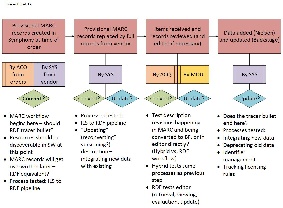

Exploratory MARC-to-BIBFRAME conversion workflows

Option 1: parallel processing in MARC and BIBFRAME, MARC record primary

Option 2: operational record in MARC, discovery data in BIBFRAME, BIBFRAME data primary

Conversion workflows by process

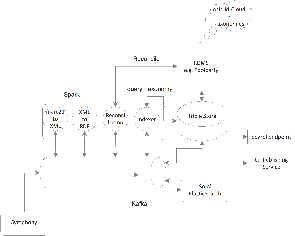

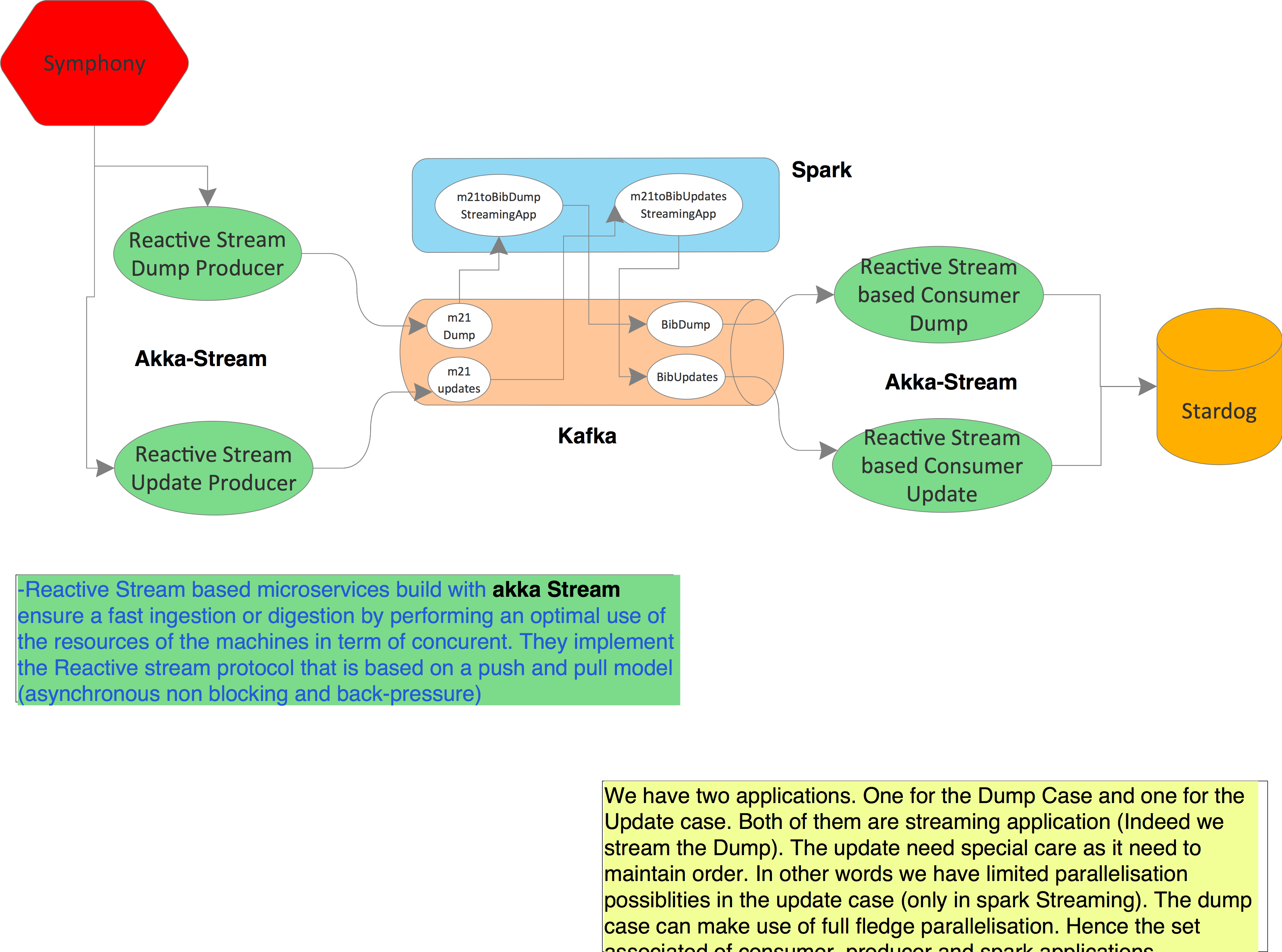

Reactive pipeline for larger-scale conversion of MARC records

Pipeline overview

Pipeline with implementation details

- Reactive pipeline demo (YouTube video)

- Scala/Kafka/Spark Linked Data Pipeline (GitHub repository)

Supporting material

- User stories for converting MARC records to BIBFRAME (pdf)

- Plans for a Minimum MARC Bibliographic Record & Attachments (pdf)

- BIBFRAME-to-Solr mapping (Google doc)

- SPARQL queries for Solr mapping (pdf)

Workflow for original cataloging

Existing item-deposit workflows

Metadata created in self-deposit interface (non-SUL users)

Metadata created in Symphony/MARC (SUL users)

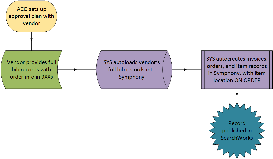

RDF-based item-deposit workflow

- CEDAR template for self-deposit item metadata (create CEDAR account to view)

- App to fetch folder contents from CEDAR and post them to a triplestore (GitHub repository)

Existing bulk-deposit workflow

MODS-to-BIBFRAME bulk-deposit workflow

- Description of automated workflow to convert tabular MODS-based metadata for a digital collection to BIBFRAME (pdf)

- Sample tabular MODS-based metadata for digital collection (tsv)

- Mapping from tabular MODS-based metadata to BIBFRAME for sample collection (txt)

- Ruby script to convert tabular MODS-based metadata to BIBFRAME

- Resulting BIBFRAME metadata for digital collection (ttl)
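
The project's conversion was done with the Ruby script listed above, which is not reproduced here. As a rough illustration of the general idea only, the following Python sketch turns rows of tab-separated metadata into Turtle. The column names (`identifier`, `title`), the base URI, and the choice of one `bf:Work` plus one `bf:Instance` per row are assumptions made for this example, not the project's actual mapping.

```python
import csv
import io

# BIBFRAME 2.0 ontology namespace
BF = "http://id.loc.gov/ontologies/bibframe/"


def escape_literal(value):
    """Escape backslashes and double quotes for a Turtle string literal."""
    return value.replace("\\", "\\\\").replace('"', '\\"')


def row_to_turtle(row, base_uri):
    """Build Turtle for one row. Column names are hypothetical."""
    ident = row["identifier"]
    work = f"<{base_uri}{ident}#Work>"
    instance = f"<{base_uri}{ident}#Instance>"
    title = escape_literal(row["title"])
    return "\n".join([
        f"{work} a bf:Work .",
        f"{instance} a bf:Instance ;",
        f"    bf:instanceOf {work} ;",
        f'    bf:title [ a bf:Title ; bf:mainTitle "{title}" ] .',
    ])


def tsv_to_turtle(tsv_text, base_uri):
    """Convert a whole TSV document (with header row) to Turtle text."""
    # QUOTE_NONE keeps double quotes in cell values as literal characters.
    reader = csv.DictReader(
        io.StringIO(tsv_text), delimiter="\t", quoting=csv.QUOTE_NONE
    )
    blocks = [f"@prefix bf: <{BF}> ."]
    blocks.extend(row_to_turtle(row, base_uri) for row in reader)
    return "\n\n".join(blocks) + "\n"
```

Calling `tsv_to_turtle(tsv_text, "http://example.org/")` on a file with `identifier` and `title` columns yields one Work/Instance pair per row. A real mapping would cover many more MODS elements and would likely use an RDF library rather than string formatting.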

Project Co-Managers

- Philip Schreur, Assistant University Librarian for Technical and Access Services

- Tom Cramer, Assistant University Librarian, Chief Technology Strategist, and Director of Digital Library Systems and Services

Acquisitions Department

- Alexis Manheim, Head of Acquisitions Department

- Linh Chang, Receiving and Access Librarian

Metadata Department

- Nancy Lorimer, Head of Metadata Department

- Joanna Dyla, Head of Metadata Development Unit

- Vitus Tang, Head of Data Control and E-resources Unit

- Arcadia Falcone, Metadata Coordinator

Digital Library Systems and Services

- Darsi Rueda, Head of Library Systems Department

- Naomi Dushay, Digital Library Software Engineer

- Joshua Greben, Library Systems Programmer / Analyst

- Darren Weber, Digital Library Software Engineer

Analysis/Modeling

- Mapped Stanford's vendor-supplied copy cataloging and original cataloging workflows

- Mapped workflow for converting vendor-supplied records to linked data

- Generated requirements for work-based discovery environment, to take advantage of RDF

- Evaluated BIBFRAME profiles for original cataloging

Linked Data Creation

- Worked with vendor on improvements to supplied MARC data to enhance conversion to BIBFRAME

- Tracer Bullet 1: Converted set of 38,000 MARC records from Symphony to BIBFRAME using Library of Congress converter, loaded to Blazegraph triplestore, and indexed to Blacklight Solr environment via automated scripts

- Tracer Bullet 2: Created original descriptions of 50 items with local instance of BIBFRAME 2.0 Editor

- Tracer Bullet 3: Created original descriptions of about 30 digital assets using CEDAR RDF editor

- Tracer Bullet 4: Converted tabular MODS-based metadata for a digital collection to BIBFRAME using Ruby

- Piloted automated pipeline approach for conversion of MARC records to BIBFRAME, loading to triplestore, and indexing to Solr
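
The loading step in the tracer bullets above was handled by the project's own scripts. As a hedged sketch of what such a step might look like, the Python below builds (but does not send) an HTTP request that posts Turtle to a triplestore endpoint. It assumes the store accepts a Turtle body in a POST to its SPARQL endpoint; the endpoint URL in the usage note is a placeholder, and the function name is hypothetical.

```python
import urllib.request


def build_load_request(endpoint_url, turtle_text):
    """Prepare a POST request carrying Turtle data (hypothetical helper).

    Assumption: the triplestore ingests an RDF body POSTed to
    endpoint_url when the Content-Type is text/turtle.
    """
    return urllib.request.Request(
        endpoint_url,
        data=turtle_text.encode("utf-8"),
        headers={"Content-Type": "text/turtle"},
        method="POST",
    )
```

The prepared request could then be sent with `urllib.request.urlopen(build_load_request("http://localhost:9999/blazegraph/namespace/kb/sparql", turtle))`, where the URL shown is an assumed local address and should be replaced with the actual endpoint.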

Discovery Environment Creation

- Created an instance-based Blacklight/Solr discovery environment whose source data is a mix of linked data and MARC data

- Developed a mapping from BIBFRAME 2.0 to Solr document for book materials

- Developed a mapping from RDF to Solr for digital assets
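
The project's actual BIBFRAME-to-Solr mapping is documented in the supporting material. To illustrate the general shape of such a mapping, here is a minimal Python sketch that flattens a few BIBFRAME statements about one instance into a Solr-style document. The triples are plain `(subject, predicate, object)` tuples, and the Solr field names (`title_display`, `work_uri`) are assumptions for this example rather than the project's schema.

```python
BF = "http://id.loc.gov/ontologies/bibframe/"


def objects(triples, subject, predicate):
    """Return all objects of triples matching subject and predicate."""
    return [o for s, p, o in triples if s == subject and p == predicate]


def instance_to_solr_doc(triples, instance):
    """Flatten one bf:Instance into a dict of (hypothetical) Solr fields."""
    doc = {"id": instance}

    # Follow bf:title to the title node, then read bf:mainTitle.
    titles = []
    for title_node in objects(triples, instance, BF + "title"):
        titles.extend(objects(triples, title_node, BF + "mainTitle"))
    if titles:
        doc["title_display"] = titles[0]

    # Record the related work so results can be grouped by work.
    works = objects(triples, instance, BF + "instanceOf")
    if works:
        doc["work_uri"] = works[0]
    return doc
```

In practice the graph traversal would more likely be expressed as SPARQL queries against the triplestore, with the results posted to Solr as JSON documents.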

Tool Exploration / Requirements Definition

- Gathered requirements for conversion and editing tools

- Set up Registry of Tools

- Evaluated CEDAR template creation and metadata editing tool

- Developed a validation suite for MARC-to-RDF converters

- Created local instance of Library of Congress BIBFRAME 2.0 Editor

Presentations

- Technical Services Workflow Pipeline, Arcadia Falcone, LD4P Community Input Meeting 2017, Stanford, CA

- Linked Data for Production (LD4P): Technical services workflow evolution through tracer bullets (Stanford projects), Arcadia Falcone, Josh Greben, Nancy Lorimer, DCMI 2017, Washington, DC

- LD4P Tracer Bullet 1: an RDF-Based Copy-Cataloging Pipeline, Philip Schreur, ALA Annual 2017: LC BIBFRAME Update, Chicago, IL

- The Shot Heard Round the World: Linked Data for Production's Tracer Bullet 1, Practical Copy-Cataloging in RDF, Philip Schreur, ALA Annual 2017: Library Linked Data Interest Group, Chicago, IL